AI Is Only as Smart as Your Data …

Why Trusted Intelligence Starts with Data Readiness

Artificial intelligence is rapidly reshaping how businesses analyze information, make decisions, and operate. Executives are being promised a future filled with predictive insights, automated recommendations, conversational analytics, and intelligent business optimization. The possibilities are exciting, and the pressure to adopt AI has become impossible for most organizations to ignore.

But beneath all the excitement lies a growing reality that many businesses are only now beginning to fully understand: AI is only as trustworthy as the data behind it.

That realization is changing the conversation around modern analytics. While many companies initially viewed AI as the solution to their operational and reporting challenges, forward-thinking leaders are recognizing that AI alone cannot solve fragmented data environments, inconsistent business definitions, or disconnected operational systems. In fact, AI often exposes those weaknesses more dramatically than traditional reporting tools ever did.

This is why businesses increasingly need modern data management and analytics platforms. Not simply to generate dashboards or reports, but to create the trusted, governed, business-ready data foundation required for AI to produce meaningful, actionable insight.

The Growing Gap Between AI Ambition and Data Reality

Across nearly every industry, organizations are investing heavily in AI-enabled tools. ERP vendors are embedding AI into enterprise applications. Business intelligence platforms now feature copilots and conversational interfaces. Analytics vendors are promoting predictive models capable of uncovering hidden operational opportunities.

Yet many businesses are discovering that AI-generated insights can quickly become unreliable when the underlying data environment lacks consistency and context.

The reason is simple. Most organizations still operate with fragmented data ecosystems built over years — and sometimes decades — of disconnected growth. Customer information may live in CRM systems. Financial data resides inside ERP applications. Supply chain metrics come from warehouse, logistics, and procurement systems. Sales performance data may exist across spreadsheets, e-commerce platforms, and operational databases. Marketing maintains separate campaign and demand-generation datasets.

Meanwhile, different departments often define the same business metrics in entirely different ways. Finance may define profitability differently than Sales. Operations may calculate inventory turns differently than Supply Chain teams. Regional divisions may apply inconsistent revenue recognition rules. Product hierarchies may vary across systems. Even basic operational KPIs can become inconsistent from one department to another.

Then leadership asks AI to identify risks, recommend strategic actions, improve forecasting accuracy, or optimize operational performance.

Without trusted, harmonized data beneath those requests, AI is being asked to create certainty from inconsistency.

The issue is not that AI lacks sophistication. The issue is that AI cannot independently resolve conflicting business logic, incomplete context, or poorly governed operational data. AI models process information exactly as it is presented to them. If the data environment contains inconsistencies, contradictions, duplication, or fragmented definitions, those weaknesses become embedded within the AI-generated output itself.
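To make the point concrete, here is a minimal sketch of how two departments can reach different "margin" figures from the same orders. The field names, the sample numbers, and both definitions are assumptions invented for illustration; they are not drawn from any particular system.

```python
# Hypothetical order records; field names and values are illustrative only.
orders = [
    {"revenue": 1000.0, "cogs": 600.0, "freight": 50.0, "rebate": 30.0},
    {"revenue": 2000.0, "cogs": 1300.0, "freight": 120.0, "rebate": 0.0},
]


def sales_margin(rows):
    """Assumed Sales-style definition: revenue minus cost of goods sold."""
    revenue = sum(r["revenue"] for r in rows)
    cogs = sum(r["cogs"] for r in rows)
    return (revenue - cogs) / revenue


def finance_margin(rows):
    """Assumed Finance-style definition: also nets out rebates and freight."""
    revenue = sum(r["revenue"] - r["rebate"] for r in rows)
    cost = sum(r["cogs"] + r["freight"] for r in rows)
    return (revenue - cost) / revenue


# Same data, two definitions, two different percentages.
sales_pct = round(sales_margin(orders) * 100, 1)
finance_pct = round(finance_margin(orders) * 100, 1)
print(f"Sales margin: {sales_pct}%  Finance margin: {finance_pct}%")
```

Neither figure is wrong by its own rules. An AI tool given both, with no indication of which definition applies, has no principled way to choose between them.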

Why AI Often Amplifies Existing Business Problems

There is a misconception that AI somehow “fixes” data problems. In reality, it often magnifies them.

Traditional reporting environments already struggle when departments rely on disconnected systems and inconsistent definitions. AI simply accelerates the speed at which those inconsistencies surface. Because AI tools can generate insights rapidly and at scale, they may unintentionally spread flawed assumptions, misleading analyses, or contradictory recommendations throughout the organization.

This becomes especially dangerous in operationally complex industries like manufacturing and distribution, where business decisions depend on understanding intricate relationships between customers, suppliers, inventory, margins, production performance, transportation costs, and demand patterns.

For example, if one system categorizes customers differently than another, AI-generated profitability recommendations may become distorted. If inventory calculations vary across operational systems, forecasting and replenishment recommendations may be unreliable. If supplier performance data lacks standardization, AI-driven procurement insights may point leadership in the wrong direction.

The result is often an erosion of trust.

Executives begin questioning why different reports produce different answers. Departments lose confidence in analytics outputs. Decision-making slows because leadership spends more time debating data validity than acting on insight.

At that point, the organization does not have an intelligence problem. It has a truth problem. And AI cannot solve a truth problem on its own.

Why Centralized Data Alone Is Not Enough

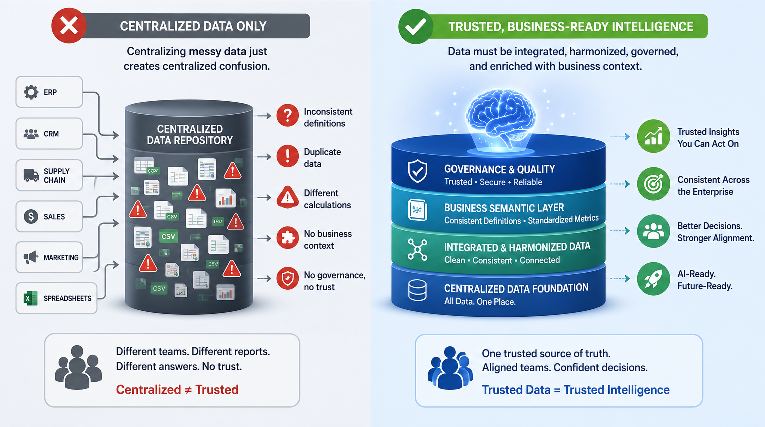

Many organizations believe the answer is simply centralizing data into a cloud repository or lakehouse environment that can be consumed by any intelligent tool. While data centralization is important, it is only one piece of the larger puzzle.

Moving fragmented data into a single location without cleansing inconsistencies, aligning definitions, applying governance, and embedding business context merely creates centralized confusion instead of distributed confusion.

True data readiness requires much more than aggregation.

Organizations need environments that can integrate operational data from multiple systems while harmonizing and standardizing that raw information, so that the resulting data reflects the actual “language” of the business.

Business definitions must align enterprise-wide. Relationships between operational entities (customers, products, suppliers, geographies, time periods, and financial structures) must be clearly established and maintained. Equally important, businesses need an analytics environment capable of preserving and applying organizational context so that AI systems and reporting tools interpret information consistently. To that end, business logic such as tax structures, discount tiers, and revenue recognition rules needs to be applied automatically as data flows through that environment.

This is where a modern data management and analytics platform becomes essential.
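As a rough illustration of what harmonizing data and applying business logic "as data flows" can look like, the sketch below maps customer keys from two source systems to one master ID and applies a discount-tier rule to each record on the way in. The mapping table, the tier thresholds, and all field names are hypothetical; a real platform would manage these as governed configuration rather than hard-coded values.

```python
# Hypothetical mapping from (source system, local key) to one master customer ID.
CUSTOMER_MASTER = {
    ("crm", "ACME-001"): "C100",
    ("erp", "10045"): "C100",
    ("erp", "10078"): "C200",
}

# Hypothetical discount tiers: (minimum order amount, discount rate), highest first.
DISCOUNT_TIERS = [(5000.0, 0.10), (1000.0, 0.05), (0.0, 0.0)]


def discount_rate(amount):
    """Return the rate of the first tier whose minimum the amount meets."""
    for minimum, rate in DISCOUNT_TIERS:
        if amount >= minimum:
            return rate
    return 0.0


def harmonize(record):
    """Resolve the master customer ID and apply the discount rule to one record."""
    key = (record["source"], record["customer_key"])
    if key not in CUSTOMER_MASTER:
        # Surface unmapped keys instead of silently creating a new customer.
        raise KeyError(f"unmapped customer key: {key}")
    gross = record["amount"]
    net = round(gross * (1 - discount_rate(gross)), 2)
    return {"customer_id": CUSTOMER_MASTER[key], "gross": gross, "net": net}


raw = [
    {"source": "crm", "customer_key": "ACME-001", "amount": 1200.0},
    {"source": "erp", "customer_key": "10045", "amount": 6000.0},
    {"source": "erp", "customer_key": "10078", "amount": 400.0},
]

# Aggregate net sales per master customer after harmonization.
net_by_customer = {}
for rec in map(harmonize, raw):
    cid = rec["customer_id"]
    net_by_customer[cid] = net_by_customer.get(cid, 0.0) + rec["net"]

print(net_by_customer)
```

Without the mapping step, the CRM and ERP records for the same customer would be counted as two separate customers, which is the "centralized confusion" described above.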

The Strategic Importance of the Semantic Layer

One of the most critical components of a modern analytics architecture is the semantic layer — a capability many organizations still underestimate.

A semantic layer acts as the business translation framework between raw technical data and meaningful operational insight. It defines how business metrics should be interpreted, how calculations should be applied, and how relationships between datasets should function across the enterprise.

In practice, this means ensuring that concepts like revenue, margin, customer profitability, inventory valuation, supplier performance, and operational KPIs are defined consistently across the entire business.

This consistency is vital because AI systems do not naturally understand organizational nuance. They do not inherently know how a business defines “active customer,” “available inventory,” or “gross margin contribution.” Without embedded business logic and semantic consistency, AI tools are forced to interpret raw data structures at face value.

That often leads to recommendations that appear intelligent on the surface but fail to reflect operational reality.

A governed semantic layer changes that dynamic entirely.

By embedding business context directly into the data environment, organizations create a trusted intelligence framework where both analytics tools and AI systems can operate with consistency, clarity, and explainability. Metrics become aligned. Relationships become transparent. Reporting becomes trustworthy. AI-generated recommendations become significantly more actionable because they are grounded in the operational realities of the business itself.
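One simplified way to picture a semantic layer is as a single registry of named metric definitions that every consumer, whether a report, a dashboard, or an AI tool, must go through. The sketch below assumes that framing; the `Metric` class, the `evaluate` function, the 90-day "active customer" rule, and the sample rows are all hypothetical and do not represent any vendor's API.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Row = Dict[str, Any]


@dataclass(frozen=True)
class Metric:
    """One governed business definition: a name, a description, and a calculation."""
    name: str
    description: str
    compute: Callable[[List[Row]], float]


def _gross_margin_pct(rows: List[Row]) -> float:
    revenue = sum(r["revenue"] for r in rows)
    cogs = sum(r["cogs"] for r in rows)
    return 0.0 if revenue == 0 else (revenue - cogs) / revenue * 100


def _active_customers(rows: List[Row]) -> float:
    # Assumed rule: a customer is "active" if any order is 90 days old or newer.
    return float(len({r["customer_id"] for r in rows if r["days_since_order"] <= 90}))


SEMANTIC_LAYER: Dict[str, Metric] = {
    m.name: m
    for m in (
        Metric("gross_margin_pct", "(revenue - COGS) / revenue, in percent", _gross_margin_pct),
        Metric("active_customers", "customers with an order in the last 90 days", _active_customers),
    )
}


def evaluate(metric_name: str, rows: List[Row]) -> float:
    """Resolve a metric by name so every consumer uses the same definition."""
    if metric_name not in SEMANTIC_LAYER:
        raise KeyError(f"metric not defined in semantic layer: {metric_name}")
    return SEMANTIC_LAYER[metric_name].compute(rows)


rows = [
    {"customer_id": "C100", "revenue": 1000.0, "cogs": 600.0, "days_since_order": 12},
    {"customer_id": "C100", "revenue": 500.0, "cogs": 350.0, "days_since_order": 40},
    {"customer_id": "C200", "revenue": 500.0, "cogs": 250.0, "days_since_order": 200},
]

margin = evaluate("gross_margin_pct", rows)
active = evaluate("active_customers", rows)
print(margin, active)
```

The design choice worth noting is that a request for an undefined metric fails loudly instead of letting the caller improvise a calculation, which is what keeps answers consistent across tools.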

The Future Belongs to Organizations That Build Trusted Intelligence

The companies that will succeed with AI over the next decade will not necessarily be the ones with access to the most AI tools. AI capabilities will increasingly become embedded into nearly every enterprise platform.

The true differentiator will be the quality and trustworthiness of the data ecosystem supporting those tools.

Organizations that establish unified, governed, business-ready intelligence environments will gain enormous advantages. They will make faster decisions with greater confidence. They will reduce operational friction caused by inconsistent reporting. They will improve forecasting accuracy. They will uncover cross-functional insights that siloed systems cannot reveal. And most importantly, they will position themselves to leverage AI responsibly and effectively at scale.

This is precisely why data management and analytics platforms like Silvon’s Stratum matter now more than ever. In the years ahead, the winners will not simply be the companies that adopt AI first. They will be the organizations that already have strong data foundations in place, so that their AI and other business intelligence solutions produce outcomes that are both trustworthy and actionable.